May 20, 2024

By Louis DiPietro

From platforms that screen mortgage applications and résumés to tools that predict the likelihood that defendants will re-offend, AI systems and the algorithms behind them are relied on to make quick and efficient decisions in areas with major consequences, including healthcare, hiring, and criminal justice.

Though the potential for AI is immense, its early adoption has been beset by recurring challenges: a home-loan processing algorithm was far more likely to deny applications from people of color than from white applicants; hiring algorithms meant to screen applicants are increasingly being used without a hard look under the hood; and AI-powered software used by the U.S. criminal justice system was twice as likely to falsely predict future criminality in black defendants as in white defendants.

Now more than ever, at the dawn of an AI-assisted future, Cornell’s leadership in AI and in areas of ethics and fairness in technology is both guiding the development of better, fairer AI and shaping the minds of future AI innovators.

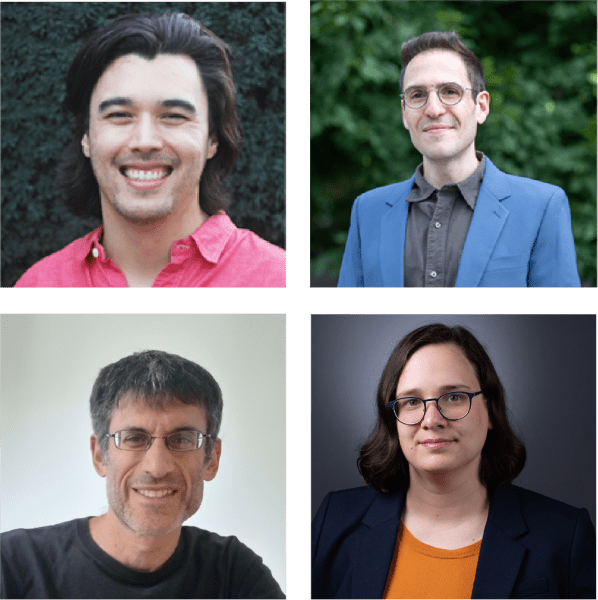

“In Cornell Bowers CIS, we're really benefiting from how close the connections are between areas that, on the surface, seem quite disparate – computer science, information science, statistics, economics, sociology, law, and science and technology studies,” said Jon Kleinberg ’93, the Tisch University Professor of Computer Science. “These areas have been brought together under Bowers CIS by this shared interest in the impact that technology is having on society.”

As a leader in developing responsible AI, Cornell Bowers CIS is uniquely positioned to guide tomorrow’s innovators as they dive deeper into issues of ethics, fairness, and privacy, while weighing the policy implications of technological advances. World-class research, collaboration, and education are written in Cornell Bowers CIS’s DNA. Formed decades ahead of its time in 1999, Cornell Bowers CIS was one of the first academic entities to foresee the coming technological age and then organize to meet it collaboratively, drawing on the expertise of pioneers in each of its three departments – computer science, information science, and statistics and data science.

“It is important to understand human processes to design AI responsibly,” said Emma Pierson, an assistant professor of computer science at the Jacobs Technion-Cornell Institute at Cornell Tech and the Technion, where she holds the Andrew H. and Ann R. Tisch Assistant Professor Chair, and a computer science field member in Cornell Bowers CIS. “AI has deep impact on human lives, and I want to teach students this first because I deeply believe that it's true, and also because I think it can broaden the universe of people who are interested in AI.”

Timely and important questions surrounding the consequences of technology in society, the efficacy of algorithms used in high-impact decisions, and how to leverage AI for the broadest, most beneficial impact are research hallmarks in the college.

And in the classroom, Cornell Bowers CIS undergraduate and graduate students at the university’s Ithaca and New York City campuses are learning what it means to build technology for the greatest good.

It wasn’t just one Cornell course for Hajar Sabir ’23, MPS ’24. An information science major, Sabir said understanding issues of ethics and fairness in technology was a central part of her education within Cornell Bowers CIS.

“My Cornell education helped me understand my role as an advocate for customers in the design process,” said Sabir, who is now an associate product manager at Capital One. “It informs my day-to-day conversations I have around product design, and as an entrepreneur, it taught me to be very intentional and transparent with whatever I put out there, whether that’s a product or a new business.”

Together, Kleinberg and Karen Levy, associate professor of information science with an appointment in Cornell Law School, co-teach an introductory Cornell course called Choices and Consequences in Computing (INFO 1260). They are a formidable duo: Kleinberg, a pioneer in computing and information science, MacArthur Fellow, and co-author of the literal textbook on Algorithm Design, whose research on algorithms, data mining, and networks has been cited by scientists nearly 130,000 times; and Levy, whose research explores digital technologies used to enforce rules and make decisions about people. Her work has been published widely, with mentions in the likes of the New York Times and the Washington Post, and her recent book on surveillance in the trucking industry received critical acclaim.

The course is open to all majors every spring semester and draws more than 700 students across Cornell who are interested in data privacy, the fairness of algorithmic decision-making, and accountability in the design of computing systems.

Surveying the ethical, societal, and policy implications of computing and information science, Kleinberg and Levy cover a lot of ground – from disinformation, hate speech, and data collection to bias in algorithms, inequality, and the First and Fourth Amendments (the right to free speech and the prohibition of unlawful searches and seizures, respectively).

“I think we put a lot of hope in technology to solve social problems, and it's really hard to solve a social problem with a purely technical solution,” Levy said. “We need to really understand the history and social context of a problem to begin to address it effectively.”

Technologists alone will not manifest AI’s potential for the greater good. It’s a sentiment Emma Pierson has molded into her own philosophy as a computer scientist, statistician, educator, and perpetual student of the world.

“We produce harmful algorithms if we’re not thinking about how to model social biases when training algorithms,” said Pierson, a leading researcher on AI, inequality, and healthcare. “For that reason, I think it's very important to form connections with fields outside of computer science. In my own philosophy, I just try and be curious and humble and accept that there are lots of things that I don't know.”

Named to the Forbes 30 Under 30 Science list and MIT’s 35 under 35 list, Pierson develops statistical and AI models to glean insights about inequality and healthcare hidden in large data sets. True to her philosophy around AI, she works closely with researchers traditionally outside of computer science to examine inequalities around pain, policing, and COVID-19, and has recently studied segregation in large cities and fair clinical prediction.

With Allison Koenecke, assistant professor of information science in Cornell Bowers CIS, Pierson co-teaches Practical Principles for Designing Fair Algorithms (CS 5382), a computer science course held at Ithaca and Cornell Tech where students learn how to develop and test algorithms that serve the greater good.

For Data Science for Social Change (CS 6382), a separate, doctoral-level course she leads, Pierson draws on her expertise in writing about technical analyses for general audiences in publications like the New York Times, FiveThirtyEight, the Atlantic, and many others.

“The purpose of the class is twofold: it's to teach students how to have a positive impact on the world through technical analysis,” she said, “and it's also to teach them how to communicate those analyses to a mass audience, which scientists are not always good at.”

Pierson joined the Jacobs Technion-Cornell Institute at Cornell Tech and Cornell Bowers CIS in 2021, lured by a collective charge to effect a greater good, she said.

“It’s clear that deeply embedded in the ethos of this place is an emphasis on social impact,” she said.

Nearly two and a half millennia since his time, Aristotle and his “virtue ethics” – in short, to live a life of good character – are every bit as relevant to budding statisticians as the technical skills they learn to build AI models, according to Elizabeth Karns, senior lecturer of statistics and data science at Cornell Bowers CIS and at the ILR School.

An epidemiologist and lawyer, Karns launched Integrated Ethics in Data Science (STSCI 3600) several years ago in response to what she viewed as a disconnect between statisticians and the high-powered, high-consequence statistical models they were being asked to build. The seven-week course is offered twice each spring semester.

“I started thinking more about algorithms and how we are not preparing students sufficiently to be confronted with workplace pressures to just get the model done – ‘Put in the data, don't question it, and just use it,’” she said.

The problem, as she sees it, is that these models are largely unregulated, have no governing body, and thus skirt rigorous scientific testing and evaluation. Without such oversight, ethics and fairness become a matter of discretion on the part of the statisticians developing the models; personal values and virtues are brought into the equation, and this is where Aristotle’s wisdom proves vital, she said.

“At this point in our lack of regulation, we need to depend on ethical people,” Karns said. “I want students to learn to pause and reflect before making decisions, and to ask, ‘How well does this align with my values? Is this a situation that could lead to problems for the company or users? Is this something I want to be associated with?’ That’s the core of the class.”

For the course, Karns – with the help of Cornell’s Center for Teaching Innovation (CTI) – developed an immersive video, “Nobody’s Fault: An Interactive Experience in Data Science Practice,” which challenges students to consider a moral conflict brought about by a bad model.

“I tell my students that we're going to be in situations in this class where there's not a clear right or wrong answer,” she said. “And that's the point – to struggle with that ambiguity now and get some comfort in that gray space. That way, when they get out into the workplace, they can be more effective.”

Cornell Bowers CIS’s leadership in ethics and fairness in technology has strengthened over the past year with the addition of two new faculty members – Michael Kim and Daniel Susser. Kim, an assistant professor of computer science, investigates foundational questions about responsible machine learning.

With Robert D. Kleinberg, professor of computer science in Cornell Bowers CIS, Kim co-teaches Introduction to Analysis of Algorithms (CS 4820).

Susser, an associate professor of information science, examines issues brought about by new and emerging data-driven technologies and the barriers that stand in the way of addressing them. With Gili Vidan, assistant professor of information science in Cornell Bowers CIS, Susser co-teaches Information Ethics, Law, and Policy (INFO 1200), an introductory course that investigates societal challenges of contemporary information technology. He also leads a graduate seminar and colloquium series on Ethics and Politics in Computing.

“I think it takes a full-court press of different disciplines, ways of thinking and understanding of people with different backgrounds and life experiences to really understand fully the impact that technology will have and to try to mitigate its negative effects and amplify its positive effects,” Levy said. “What I try to teach students is not what the right answers are, but what the right sorts of questions are. Those are more portable things that they can take with them into their careers.”

Louis DiPietro is a writer for the Cornell Ann S. Bowers College of Computing and Information Science.