December 1, 2022

By Patricia Waldron

In the 2000s, Owolabi Legunsen, assistant professor of computer science, worked as a software engineer at a Nigerian company, primarily writing code for a finance firm. Nigeria is now home to a booming tech sector with million-dollar startups, but at the time, most people working with computers did sales or IT. Legunsen knew he was lucky to have a software engineering job, but at the same time, “I was often writing bugs that sometimes contained software,” he said. “I always thought to myself, there’s no way Google, Microsoft, or Facebook would do software engineering like this.”

To improve, Legunsen enrolled in a computer science graduate program at the University of Texas at Dallas, where he got his first taste of research. He realized, “this is it. I want to be there at the forefront, creating new knowledge for better software engineering.” He went on to earn a Ph.D. at the University of Illinois at Urbana-Champaign and joined the computer science faculty at the Cornell Ann S. Bowers College of Computing and Information Science in 2020.

Now, Legunsen’s work touches on different aspects of software engineering, but his main focus is on developing new methods for testing and validating code.

Bugs – errors and flaws in software that cause unexpected results – can have terrible costs. To give just a few examples, bugs have brought down social media platforms, disrupted trading on the stock market, and precipitated deadly plane crashes. Bugs are also a massive drain on the economy. A report from the Consortium for Information and Software Quality (CISQ) found that buggy software cost the U.S. economy $2.08 trillion in 2020, or about 10% of the GDP.

In the last two years, Legunsen and his collaborators have published two innovative ways to test software that fill major holes in the arsenal of existing methods.

“What we’re doing is identifying gaps in how people can test software today and the kinds of bugs that escape that testing process,” Legunsen said. “When we find those gaps, we invent new types of tests that can help us find bugs earlier and reduce these huge costs.”

New layers of testing

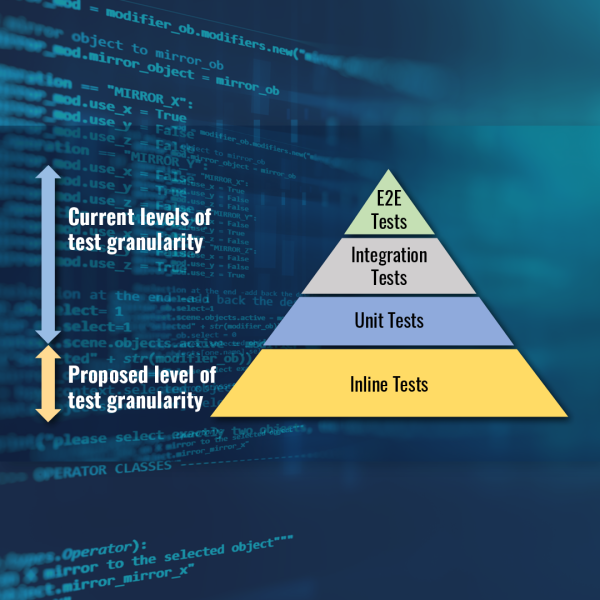

Working with colleagues at the University of Texas at Austin, Legunsen helped develop inline tests, a method for checking smaller portions of code than has previously been possible. Inline tests allow software developers to test individual program steps, called statements. In other words, an inline test asks: given an input, what does the statement yield as an output, and does that output match what was expected?

“Many times, developers just want to check, ‘what does this statement compute?’ and right now, there is no framework-level testing support for answering that question,” Legunsen said.
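To make the idea concrete, here is a minimal Python sketch of what checking a single statement could look like. It is an illustration only, not the API of the team's framework: the helper `check_statement` and the example statement are hypothetical, and the real tool may look quite different.

```python
import re


def check_statement(statement, given, expected):
    """Hypothetical helper: run ONE statement with the `given` variable
    bindings, then report whether each variable named in `expected`
    ended up with the expected value."""
    env = dict(given)                 # inputs visible to the statement
    exec(statement, {"re": re}, env)  # execute just that one statement
    return all(env[name] == value for name, value in expected.items())


# Statement under test (assumed example): extract the minor version
# number from a version string such as "3.11.4".
STATEMENT = r'minor = int(re.match(r"\d+\.(\d+)", version).group(1))'

# "Given this input, does the statement yield the expected output?"
ok = check_statement(
    STATEMENT,
    given={"version": "3.11.4"},
    expected={"minor": 11},
)
print("statement behaves as expected:", ok)
```

The check targets one statement rather than the whole function around it, which is the granularity the article describes.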

Previously, the lowest level of software testing was a unit test, which checks individual functions that can include multiple program statements. The next level up is integration tests, which determine if independent units interact correctly. Finally, there is end-to-end testing, which takes the broadest view and checks whether the software is meeting all its requirements from beginning to end. Together, these three types of software testing form the classic “software testing pyramid” featured in textbooks.

With the creation of inline tests, Legunsen’s team adds a new base to the pyramid. The new layer provides a checkpoint for each statement and allows programmers to test their code more cleanly and effectively than existing ad hoc workarounds do. Furthermore, the inline tests are recorded alongside the source code, providing documentation of what each statement is doing.

Legunsen compares inline tests to giving multiple quizzes over the course of a semester instead of one final exam – it’s a way to catch small problems early on. Studies have shown that errors at the statement level are common, but are rarely found with available testing methods, such as unit tests. The team discovered some statement-level bugs during their research and reported them to the engineers of the affected systems, who immediately fixed them.

In the initial study, nine users tried out the inline testing framework for the Python programming language and uniformly reported that it was easy and helpful to use. Legunsen’s group has also developed an inline testing framework for Java and plans to extend the capability to other programming languages. The code is publicly available.

Testing beyond the code

Legunsen’s interest in software testing extends beyond the code itself. In a recent paper, he and colleagues at the University of Illinois at Urbana-Champaign developed ctests, a method for testing software configurations – “knobs” that are outside the code, which can be tuned to control how software works. Configurations can include the number of servers to use when deploying software on a cluster of servers, the number of backup servers, and the bandwidth for sending data back and forth.

Cloud and internet services make hundreds or thousands of configuration changes each day, which can cause failures that result in service disruptions.

For example, suppose a configuration change reduces the number of threads used in one system module from 10 to five, a valid change for that module. The system would still crash, because a dependent module requires at least six threads to run. Ctests helped find this problem before it manifested.

Previous work by Legunsen and others showed that configuration-related failures most often occur when valid configuration changes uncover underlying bugs in the software.

Ctests lets users try out new configurations to see how they interact with the code, so that those bugs surface before the software crashes or behaves incorrectly when deployed under the new settings.

“Essentially, a ctest creates a connection between the configurations that I may set in the future, and the code that I may run on those configurations,” Legunsen said. “That link was missing before we did this work.”
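The thread-count example above can be sketched in Python as a test that takes the configuration as a parameter. This is a simplified illustration under stated assumptions, not the team's implementation: the `Scheduler` class, the `worker.threads` key, and the `run_ctest` helper are all hypothetical names invented for this sketch.

```python
# Current settings, assumed for illustration.
DEFAULT_CONFIG = {"worker.threads": 10}

# Hypothetical requirement of the dependent module in the example.
MIN_SCHEDULER_THREADS = 6


class Scheduler:
    """Hypothetical dependent module that needs at least six threads."""

    def __init__(self, config):
        threads = config["worker.threads"]
        if threads < MIN_SCHEDULER_THREADS:
            raise RuntimeError(
                f"scheduler needs at least {MIN_SCHEDULER_THREADS} threads, got {threads}"
            )
        self.threads = threads


def scheduler_starts(config):
    """An ordinary test, except that the configuration is an input."""
    scheduler = Scheduler(config)
    assert scheduler.threads == config["worker.threads"]


def run_ctest(test, base_config, change):
    """Run `test` against the base configuration overlaid with a proposed
    change, and return (passed, reason) instead of crashing."""
    candidate = {**base_config, **change}
    try:
        test(candidate)
    except Exception as exc:
        return False, str(exc)
    return True, ""


# The current settings pass; the proposed change from 10 to 5 threads fails.
passed_default, _ = run_ctest(scheduler_starts, DEFAULT_CONFIG, {})
passed_change, reason = run_ctest(scheduler_starts, DEFAULT_CONFIG, {"worker.threads": 5})
print(passed_default, passed_change, reason)
```

Running the same test under the proposed settings exposes the failure before deployment, which is the link between future configurations and code that the quote describes.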

In a broader sense, it also opens up a channel of communication between the software engineers who write the code and the system admins who turn the knobs. Before ctests, code and configurations were tested separately.

In their analysis, the team found ctests to be highly effective. They evaluated the approach on five software systems that are widely used in the cloud by making failure-inducing configuration changes and misconfigurations. Ctests helped them find scores of actual bugs in the tested systems, which they reported. Ultimately, those bugs were confirmed and fixed by the engineers who designed the software, showing that ctests is already leading to improvements.

One drawback of ctests is that it is expensive, in terms of time and computing power. However, other researchers working with the system have now published award-winning work that speeds up ctest usage so that it detects bugs faster and consumes less computing power.

Legunsen hopes that others will continue to adopt the testing method.

“We’re hoping that ctests fuels a shift in the paradigm, to where, whenever you want to change your configurations, you first run ctests,” Legunsen said. “When your ctests pass, you gain more confidence to deploy the software than you would have without ctests.”

Legunsen has also started tackling this problem from the other direction, starting with the code. He and others showed in a follow-up study that the sheer amount of research on detecting misconfigurations is likely symptomatic of the fact that configuration design and implementation has not received sufficient attention as a first-class software engineering activity. If he can find better ways to design these systems in the first place, he thinks he can reduce the odds that the software can be misconfigured.

Overall, through his work at Cornell Bowers CIS, Legunsen aims to shift the way that software engineers design and test their software and to help others to do so. “I’m really happy now to teach other people how to do that research – to design better software and improve the testing landscape.”

The research described above was partially supported by Google, Meta, Microsoft, and the U.S. National Science Foundation.

Patricia Waldron is a writer for the Cornell Ann S. Bowers College of Computing and Information Science.